Archive

Get Answers about ITIL

IT Skeptic recently launched a new section on its website called “Answers from the ITIL Wizard”. The section promises first-hand answers to any ITIL question from an experienced consultant (read: 12-15 years of industry experience).

Click here for the page.

What makes this page all the more interesting is the disclaimer at the end, which says: “Look, we shouldn’t really have to say this but for the critically challenged amongst our readers: please be aware that The ITIL Wizard is satire. It is not meant to be taken seriously, it should not be used as advice on ITIL or anything else, it is frequently wrong (though occasionally alarmingly right).”

On the contrary, I really liked the responses that I have read so far. They made more sense than the typical ITIL Gyanguru responses!

The Paradox of the 9s by Hank Marquis

So I had something on 6, 7, and 8 yesterday. Today is the turn of 9.

In this edition of the DITY newsletter, Hank talks about how many 9s customers actually need when it comes to availability.

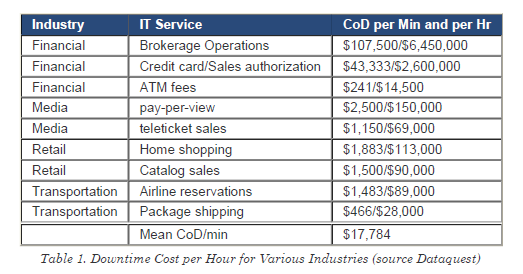

Cost of Downtime (Source: DITY Newsletter)

The newsletter covers three things:

- Why an organization should care about availability, with some interesting statistics on CoD (Cost of Downtime)

- How to measure availability, and

- Translating business needs into 9s
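To make the 9s concrete, here is a minimal sketch of the usual arithmetic. It is my own illustration, not taken from Hank's newsletter: the function names are hypothetical, a year is assumed to be 365 days, and any cost-per-hour figure you feed in is your own estimate.

```python
# Illustrative only: names and the 365-day year are assumptions,
# not something defined in the DITY newsletter.
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def availability(uptime_minutes, downtime_minutes):
    """Measured availability as a fraction: uptime / (uptime + downtime)."""
    total = uptime_minutes + downtime_minutes
    if total <= 0:
        raise ValueError("total observed time must be positive")
    return uptime_minutes / total


def allowed_downtime_minutes(nines):
    """Yearly downtime budget for a given number of 9s (3 means 99.9%)."""
    unavailability = 10 ** (-nines)
    return MINUTES_PER_YEAR * unavailability


def yearly_downtime_cost(nines, cost_per_hour):
    """Rough Cost of Downtime if the whole yearly budget is used up."""
    return allowed_downtime_minutes(nines) / 60 * cost_per_hour


if __name__ == "__main__":
    for n in (2, 3, 4, 5):
        print(n, "nines:", round(allowed_downtime_minutes(n), 2), "minutes per year")
```

With these assumptions, three 9s (99.9%) leaves a budget of about 525.6 minutes, or roughly 8.76 hours, of downtime per year. Multiplying that budget by an hourly cost estimate is one simple way to ask whether an extra 9 is worth paying for.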

7 Dirty Little Truths About Metrics from DITY

Looks like today is a number day! The previous post was with the number 6 and this one with the number 7.

In this weekly newsletter from DITY (Do IT Yourself), Hank Marquis writes some truths about metrics, followed by a simple checklist for creating metrics that matter.

Click here to read the article or here to download a PDF. For my own benefit, here is the checklist that he has written in his article:

- Align with Vital Business Functions. Regardless of the IT activity, you need to make sure your metrics tells you something about the VBF that depends on what you are measuring.

- Keep it simple. A common problem manager fault is overloading a metric. That is, trying to get a single metric to report more than one thing. If you want to track more than one thing, create a metric for each. Keep the metric simple and easy to understand. If it is too hard to determine the metrics, people often fake the data or the entire report.

- Good enough is perfect. Do not waste time polishing your metrics. Instead, select metrics that are easy to track, and easy to understand. Complicated or overloaded metrics often require excessive work, usually confuse people, and do not get used. Use a tool like the Goal Question Metric (GQM) model to clarify your metrics.

- Use metrics as indicators. Key Performance Indicators are metrics! A KPI does not troubleshoot anything, but rather the KPI indicates something is amiss. A KPI normally does not track or show work done. Satisfying several KPI normally means satisfying the related CSF. The KPI is an indicator, a metric designed to alert you that CSF attainment might be in jeopardy, that is all. A good metric (KPI) is just an excellent indicator of the likelihood of attaining a CSF.

- A few good metrics. Too many metrics, even if they are effective, can overwhelm a team. For any processes 3 to 6 CSFs are usually all that is required. Each CSF might have 1 to 3 KPI. This means most teams and individuals might have just 2-5 metrics related to their activities or process. Any more and either the metrics won’t get reported, or the data gets faked. Too many metrics transforms an organization into a reporting factory — focusing on the wrong things for the wrong reasons. In either case, the usefulness of the metric is compromised.

- Beware the trap of metrics. Failure to follow these guidelines invariably results in process problems. Look around your current organization.

And talking about number 8, here is a link to another post that talks about 8 features of a successful real time dashboard.

Project Failure and Success

Link to Six reasons of project failure. I found this article through a post by Michael Krigsman, in which he references this blog post by Michiko Diby. Michiko's post discusses the six reasons for project failure in detail and links to further explanation of each.

The reasons which have been highlighted in these posts are:

- Intent Failure – Occurs when the project doesn’t bring enough added value or capability to beat down the obstacles inherent throughout the process. This suggests the original intent of the project was flawed from the beginning.

- Sponsor Failure – Occurs when the person heading up the project is not actively engaged and/or does not have the authority to make decisions critical to project success.

- Design and Definition/Scope Failure – Occurs when the scope is not clearly defined, so the project team is unclear on deliverables.

- Communications Failure – Occurs when communications are infrequent or honest discussion of project problems and issues are avoided.

- Project Discipline Failure – Occurs when process/project methodology is allowed to lapse so that the mitigation factors inherent in the process are never used.

- Supplier/Vendor Failure – Occurs when the structure of supplier/vendor relationships doesn’t allow for communication and adjustments.

In addition to these, here are a few that I added in the comments:

- Change Process failure – where the scope and deliverables keep changing and there is no set/agreed change process to update the scope

- Skill Failure – when the right skills are not identified for a task and it takes longer than expected

- Stakeholder identification failure – where the reviewing authority/decision-making authority is not clearly identified

- Turnaround time failure (similar to Project Discipline Failure) – where participants other than the key project team members fail to respond in time

Michael Krigsman also has a post on IT project success, which can be read here.

Text in italics is from Michiko Diby’s post, and the clip art is from Microsoft PowerPoint.

List of Links from IT Skeptic!

I have been reading IT Skeptic for a while and I love that blog! Here is the link to a post from IT Skeptic that lists some other recommended blogs/websites for ITSM.

COBIT 4.1 – Control Objectives for Information and related Technology

Click here for available downloads from the ISACA website.

Real world experiences of CMDB success

A very good article and presentation on CMDB Success by Gaurav Uniyal, a lead consultant with Infosys Technologies. In this presentation, and post, Gaurav touches upon some of the key practices to be kept in mind while designing and implementing a Configuration Management solution.

- Know what could be delivered and how

- Baseline current processes and technology landscape

- Structured Requirements Gathering

- Analyze impact of the roll-out

- Develop processes to extract value out of the roll-out

Click here to read through the post or see the presentation.

Numbered points have been taken from Infosys ITSM Blog

Make notes!

A wonderful Dilbert comic to keep things light on a Monday!

I think Scott Adams is one of the best teachers!

Responsible and/or Accountable

There are specific differences in the dictionary meanings of the words accountability and responsibility. In the ITIL world, we also use these terms, and often in different contexts too…

When we write accountability, we are referring to a role which oversees a specific function, action or responsibility.

When we write responsibility, we are referring to a role which is actually performing the function, action or activity within a process.

For example, take the roles of Process Coordinator and Process Owner. Process Owners are usually accountable for process quality and adoption; however, it is the Process Coordinator who actually ensures process quality by continuously looking at the data, identifying training needs and establishing error correction plans.

In one of the projects that I worked on to improve process definition and introduce process updates, a role of Outcome Manager was defined. I like that term! It tells you what the role is responsible for as soon as you read it! I hope to see such relevant roles attached to ITIL process documentation too!

Terms like these commonly appear in RACI charts, which some organizations also call an SOD (Separation of Duty) Matrix.

RACI

Read a few more thoughts on RACI in ITIL by ITSMwatch columnist David Mainville here and a good article on wiki here.
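As a rough illustration of how the accountable/responsible split can be checked, here is a minimal sketch of a RACI chart as a plain dictionary. The rule that every activity has exactly one Accountable role and at least one Responsible role is a common RACI convention that I am assuming here, not something stated above, and the function name and the activities are hypothetical.

```python
# Illustrative only: check_raci, the activities and the "exactly one A,
# at least one R" rule are assumptions made for this sketch.
def check_raci(chart):
    """Return a list of problems found in a RACI chart.

    chart maps each activity to a dict of {role: letter}, where the
    letter is one of "R", "A", "C" or "I".
    """
    problems = []
    for activity, assignments in chart.items():
        letters = list(assignments.values())
        accountable = letters.count("A")
        if accountable != 1:
            problems.append(
                f"{activity}: expected exactly one Accountable role, found {accountable}"
            )
        if "R" not in letters:
            problems.append(f"{activity}: no Responsible role assigned")
    return problems


if __name__ == "__main__":
    chart = {
        "Review process data": {"Process Owner": "A", "Process Coordinator": "R"},
        "Plan training": {"Process Owner": "I", "Process Coordinator": "R"},
    }
    for problem in check_raci(chart):
        print(problem)
```

In this example the second activity gets flagged because nobody is marked Accountable for it, which is exactly the kind of gap a RACI chart is meant to expose.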
